Mark Tracy

PREFACE

“Waiter, there’s an Ant in my Pi!”

Imagine an ant in a three-dimensional universe (two spatial dimensions and time) that is forced to march from left to right, starting from an arbitrary position, along the statement “pi = 3.1415…” (where the digits are written out eternally). Suppose the ant has all of our notions of mathematics, though any symbols it uses to represent that mathematics in its three-dimensional universe would in all likelihood differ from the symbols we use in our four-dimensional universe.

To the ant, the progression of events it experiences on its march would appear to be random at first, but certain patterns would begin to emerge. It might begin to figure out that the different repeating experiences it has seem to represent numbers, though in a different symbology. The sequence might take on a seemingly permanent order during portions of the march. For example, the digits 0 and 1 may alternate a trillion times at some point in the sequence. We might imagine that the ant was dropped into that repeating sequence as its starting position, so that this pattern is all it has ever known. Eventually, though, that order would terminate, since the digits of pi are non-terminating and not ultimately repeating (i.e. they never settle into a sequence that repeats infinitely).

The clever ant might be able to sift through these appearances of illusory order and determine what it may call a “true” interpretation of the pattern in terms of the ratio of a circle’s circumference to its diameter. Here “true” should be taken to mean that when tested it always predicts the next digit accurately. Someone “apart” from this three-dimensional universe (for example, a human in our four-dimensional universe) who could see the beginning of the statement and was familiar with the symbols would trivially understand the sequence the ant is experiencing to be highly nonrandom and predictable. But the ant could only ever be satisfied with the interpretation, or model, that it has, which at best has not yet failed. Yet even when it has landed on a model that has never yet failed, the ant knows that its previous models eventually failed or proved to be only a piece of the larger pattern. This ant represents humanity.

Note that I am not saying that the universe has a potentially comprehensible, fully determinate nature, as we say the digits of pi do in the above allegory. But I am saying that even if it does, we are epistemically in the position of the ant, never even potentially able to know whether it does.

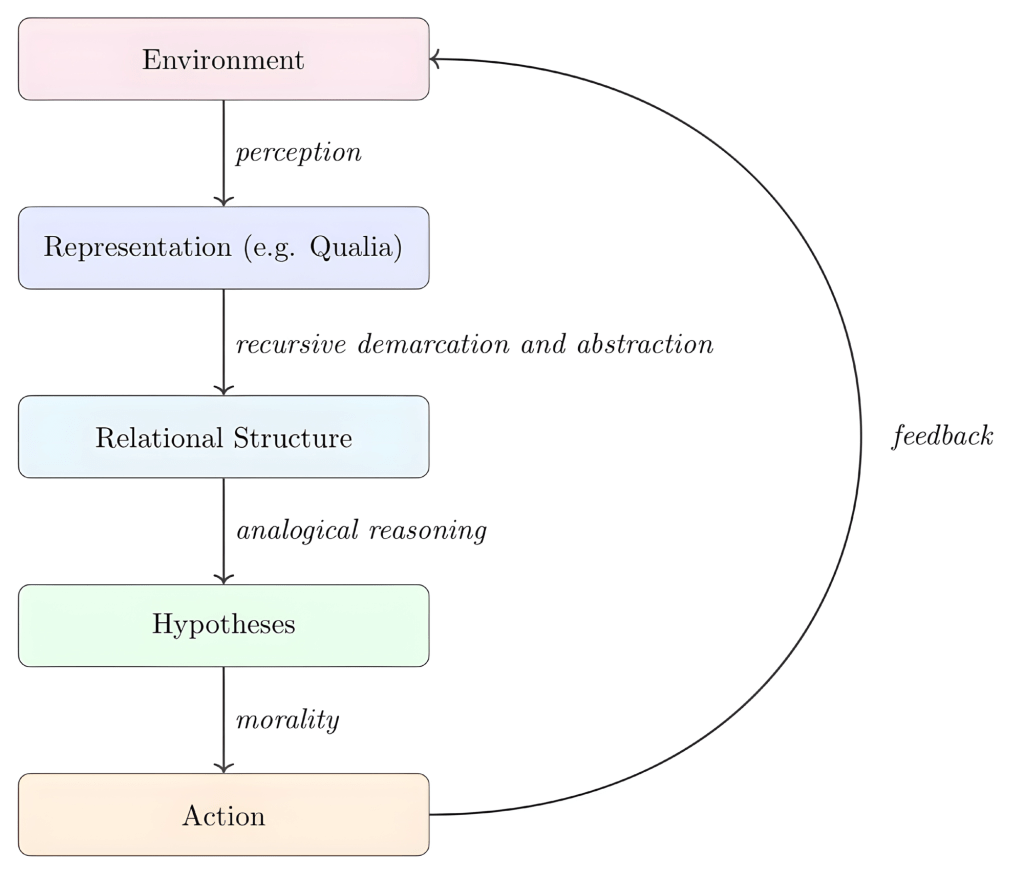

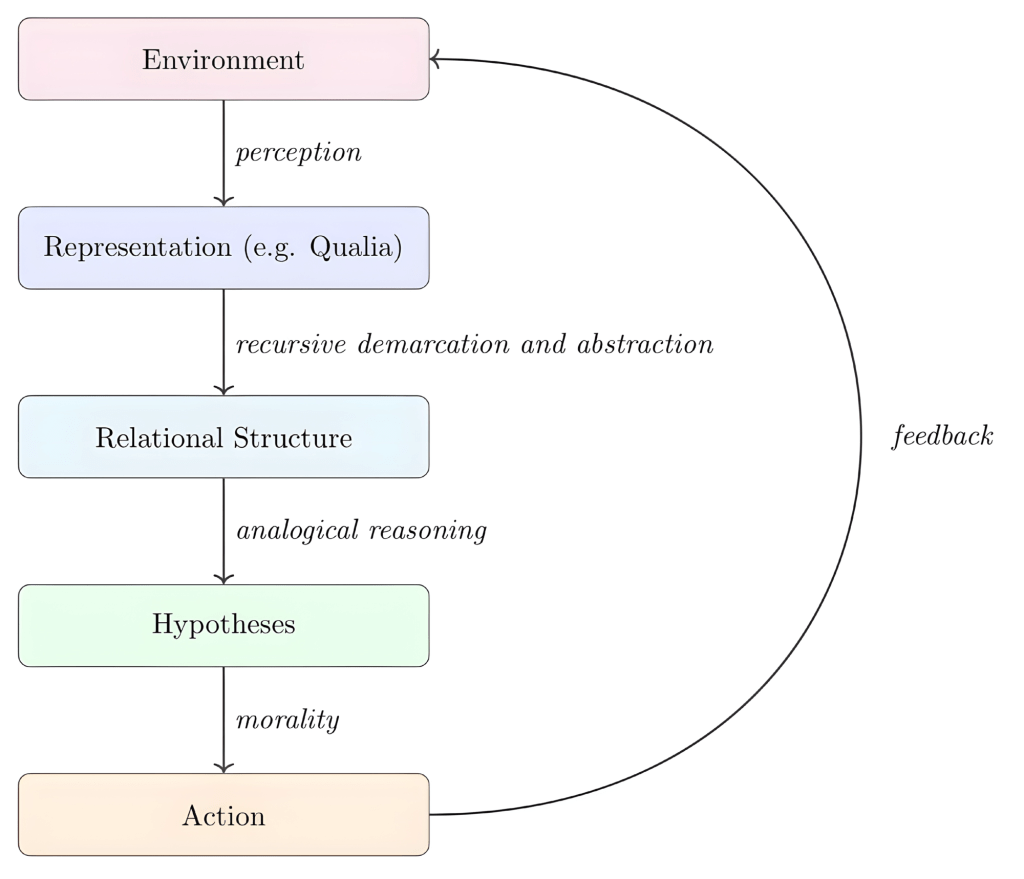

A GUIDING DIAGRAM

The Gordian Knot

This diagram is a map of a project I have been building for several years. Each node names something I have tried to understand carefully—not merely as a concept to gesture toward, but as a structure to formalize, interrogate, and trace back to its foundations. The papers linked below are the results of that effort. This article is their shared introduction.

The central obsession of the project, if I had to name it in a single question, is this:

What is the minimal structure necessary for a mind to make sense of anything at all?

Each paper approaches that question from a different direction. Together they form a stack, beginning with the most primitive conditions of intelligibility and extending upward through cognition, learning, institutions, and action.

THE FOUNDATION

Before a mind can perceive, represent, or reason, something more primitive must already be in place.

In “What Is Prior to Time and Space?”, I argue that even our most basic physical notions—time, space, before and after, here and there—are not ontological primitives. They presuppose something deeper: the simultaneous holding of unity across difference and difference amidst unity.

I call this primitive unity-in-difference.

Two orientations of this primitive appear everywhere in our representations of the world:

Demarcation — the holding of difference atop unity.

Abstraction — the holding of unity atop difference.

These are not independent operations but co-arising aspects of the same underlying structure. They make it possible to distinguish things while still understanding them as belonging to a shared whole.

To ask what is prior to time and space is therefore not to ask what came before them in a temporal sense. It is to ask what must already be possible for questions of ordering, locating, and relating to arise at all.

THE COGNITIVE PRIMITIVE

If unity-in-difference is the ontological foundation, then analogy is its cognitive expression.

In “On Abstraction and Analogy”, I develop a formal theory of analogy building on Gentner’s structure-mapping framework, with one crucial addition: every analogy is mediated by an abstract domain that both source and target instantiate.

To recognize an analogy is therefore not merely to say “A is like B.”

It is to say:

A is like B because there exists a higher-order domain of which both A and B are instances.

Analogy is thus not fundamentally a two-place relation but a three-place one. The third place is abstraction itself—the shared relational structure that both domains realize.

Through analogical reasoning, a mind transfers structure across domains of experience, generating hypotheses about unfamiliar situations based on familiar patterns.
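As a rough illustration of the three-place reading (a toy sketch, not the formalism of the paper), the snippet below treats a domain as a set of relation triples and accepts an analogy only when both source and target instantiate the same abstract domain. The solar-system and atom facts, the `central_force` domain, and every function name are assumptions introduced for the example.

```python
from typing import Dict, Optional, Set, Tuple

# A relation is (relation name, first argument, second argument).
Relation = Tuple[str, str, str]


def instantiates(abstract: Set[Relation], concrete: Set[Relation],
                 mapping: Dict[str, str]) -> bool:
    """True if every abstract relation, renamed through `mapping`, holds in `concrete`."""
    return all((name, mapping[a], mapping[b]) in concrete for name, a, b in abstract)


def analogy(abstract: Set[Relation],
            source: Set[Relation], target: Set[Relation],
            to_source: Dict[str, str], to_target: Dict[str, str]) -> Optional[Dict[str, str]]:
    """Return the source-to-target correspondence mediated by `abstract`,
    or None if either domain fails to instantiate it."""
    if not (instantiates(abstract, source, to_source)
            and instantiates(abstract, target, to_target)):
        return None
    # Compose the two instantiations: source object -> abstract role -> target object.
    return {to_source[role]: to_target[role] for role in to_source}


# Hypothetical abstract domain shared by both concrete domains.
central_force = {("attracts", "center", "satellite"),
                 ("revolves_around", "satellite", "center")}

solar_system = {("attracts", "sun", "planet"),
                ("revolves_around", "planet", "sun"),
                ("hotter_than", "sun", "planet")}  # extra fact, not part of the abstraction
atom = {("attracts", "nucleus", "electron"),
        ("revolves_around", "electron", "nucleus")}

correspondence = analogy(
    central_force, solar_system, atom,
    to_source={"center": "sun", "satellite": "planet"},
    to_target={"center": "nucleus", "satellite": "electron"},
)
print(correspondence)  # {'sun': 'nucleus', 'planet': 'electron'}
```

The direct "A is like B" correspondence never appears as an input here; it falls out only as the composition of two instantiations of the third term.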

ENTRAINMENT TO THE WORLD

A mind capable of abstraction and analogical reasoning does not develop in a vacuum. It becomes entrained to the structure of its environment.

In “Human Temporal Metacognition: An Explanatory Hypothesis”, I examine one particularly important environmental signal: the quasi-periodic rhythms produced by the interaction of the Earth, Moon, and Sun.

The solar day, lunar phase cycle, solar year, and circadian rhythm create a system of repeating yet incommensurate cycles whose relative phases continually drift. For an observer embedded within this system, the ability to demarcate intervals and abstract recurring patterns becomes highly advantageous.
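A small numerical sketch can make the drift concrete. It tracks only two of the cycles, the lunar phase cycle and the solar year, both measured in solar days. The constants are standard approximate mean values supplied here as assumptions, not figures taken from the paper, and the function names are hypothetical.

```python
from typing import List

# Approximate mean values, in solar days (assumed for illustration).
SYNODIC_MONTH_DAYS = 29.530588   # new moon to new moon
TROPICAL_YEAR_DAYS = 365.2422    # one cycle of the seasons


def lunar_phase(day: float) -> float:
    """Fraction of the lunar cycle elapsed at `day`, in [0, 1), taking day 0 as a new moon."""
    return (day % SYNODIC_MONTH_DAYS) / SYNODIC_MONTH_DAYS


def phase_at_year_starts(years: int) -> List[float]:
    """Lunar phase at the start of each of the first `years` solar years."""
    return [lunar_phase(year * TROPICAL_YEAR_DAYS) for year in range(years)]


phases = phase_at_year_starts(20)
for year, phase in enumerate(phases):
    print(f"year {year:2d}: lunar phase {phase:.3f}")
```

Under these values the phase at the start of each year never repeats exactly. After 19 years it comes close to its starting value, the near-coincidence known as the Metonic cycle, which is the kind of recurring pattern an embedded observer could abstract and build a calendar on.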

From this interaction between mind and environment emerge:

- counting

- calendars

- historical records

- continuous models of time

Human temporal cognition, on this view, is not simply an innate faculty but a response to a structured dynamical environment.

THE AGENT

All of the preceding discussion implicitly presupposes an agent capable of performing these operations.

In “Systems”, I develop a general formal framework for representing any system in terms of inputs, outputs, state, and dynamics. This framework applies equally well to mechanical systems, biological organisms, and cognitive agents.
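One minimal way to picture a description in terms of inputs, outputs, state, and dynamics is a discrete-time state machine. The sketch below is only that, assuming discrete steps and an output that depends on state alone; the `System` class, its `readout` field, and the water-tank example are hypothetical and are not the formalism developed in the paper.

```python
from dataclasses import dataclass
from typing import Callable, Generic, Iterable, List, TypeVar

StateT = TypeVar("StateT")
InputT = TypeVar("InputT")
OutputT = TypeVar("OutputT")


@dataclass
class System(Generic[StateT, InputT, OutputT]):
    state: StateT
    dynamics: Callable[[StateT, InputT], StateT]  # next state from current state and input
    readout: Callable[[StateT], OutputT]          # output as a function of state

    def step(self, value: InputT) -> OutputT:
        """Apply one input, update the state, and return the resulting output."""
        self.state = self.dynamics(self.state, value)
        return self.readout(self.state)

    def run(self, inputs: Iterable[InputT]) -> List[OutputT]:
        """Feed a sequence of inputs through the system, collecting the outputs."""
        return [self.step(value) for value in inputs]


# Toy mechanical system: a tank whose level (state) changes with net inflow (input)
# and which reports whether it is above a 10-unit mark (output).
tank = System(
    state=0,
    dynamics=lambda level, inflow: max(0, level + inflow),
    readout=lambda level: level > 10,
)
outputs = tank.run([4, 4, 4, -5])
print(outputs)     # [False, False, True, False]
print(tank.state)  # 7
```

The same four slots could in principle be filled by an organism or a cognitive agent; only the types of state, input, and output change.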

But the inquiry eventually turns back on the modeler.

If I am myself a real system, can I coherently represent myself as one?

The answer I arrive at is that a subject always describes the world from the inside. The agent and environment are not independent objects but mutually constituting processes interacting through feedback.

I call this process the Becoming-Held-As-By: the transformation of potentiality into existence through representation by a subject.

ENGINEERING THE PRIMITIVE

If analogy is the cognitive primitive underlying reasoning, an obvious question follows:

Can we build systems that replicate it?

In “Recursive Relational Abstraction (RRA)”, I propose a framework for designing machine learning systems that reason analogically.

The core strategy is recursive. A problem is:

- decomposed into primitive components,

- represented in abstract form,

- grouped into domains,

- analyzed for relations within domains, and then

- analyzed for relations between those relations.

This twice-recursive structure—relations between relations—is exactly what the formal theory of analogy demands.
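The five steps can be walked through on a deliberately tiny problem. The sketch below is hand-written rather than learned, and it is not the RRA framework itself: the problem encoding (integers separated by `|`), the choice of consecutive differences as within-domain relations, and all function names are assumptions made for illustration.

```python
from typing import Dict, List, Optional, Tuple


def decompose(problem: str) -> List[str]:
    """Step 1: split the raw problem into primitive tokens."""
    return problem.split()


def represent(tokens: List[str]) -> List[Optional[int]]:
    """Step 2: abstract form, integers for items and None for a domain boundary."""
    return [None if token == "|" else int(token) for token in tokens]


def group(items: List[Optional[int]]) -> List[List[int]]:
    """Step 3: collect items into domains, splitting at each boundary."""
    domains: List[List[int]] = [[]]
    for item in items:
        if item is None:
            domains.append([])
        else:
            domains[-1].append(item)
    return domains


def relations_within(domain: List[int]) -> Tuple[int, ...]:
    """Step 4: first-order relations, here the differences between neighbours."""
    return tuple(b - a for a, b in zip(domain, domain[1:]))


def relations_between(first_order: List[Tuple[int, ...]]) -> Dict[Tuple[int, int], bool]:
    """Step 5: second-order relations, here whether two domains share the same relations."""
    return {(i, j): first_order[i] == first_order[j]
            for i in range(len(first_order))
            for j in range(i + 1, len(first_order))}


def analyze(problem: str) -> Dict[Tuple[int, int], bool]:
    domains = group(represent(decompose(problem)))
    return relations_between([relations_within(domain) for domain in domains])


result = analyze("2 4 6 | 10 12 14 | 1 2 4")
print(result)  # {(0, 1): True, (0, 2): False, (1, 2): False}
```

The first two domains share no members, yet they come out as analogous because the comparison is made over their internal relations rather than over the items themselves.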

Interestingly, several models that are state of the art on analogical reasoning benchmarks turn out to instantiate this framework.

INSTITUTIONALIZING THE PROCESS

Reasoning does not occur solely within individual minds: it is intersubjective.

In “The Imagination Machine: A Formal Model of Institutional Epistemology”, I extend the framework to the level of institutions. Knowledge evolves through structured dialogue among agents operating over a shared corpus of ideas.

Agents propose compressions of accumulated reasoning. Institutions filter and transmit those compressions. Feedback from the external world evaluates the results.

Two structures evolve simultaneously:

- the reasoning under discussion

- the evaluative procedures by which that reasoning is filtered and transmitted

The resulting system models institutional learning as a recursive process of dialogue, compression, and feedback.

ACTION UNDER CONSTRAINT

A system that perceives, abstracts, reasons, and hypothesizes must eventually act.

In “The Moral Principle of Action–Motivation”, I propose an augmentation of Kant’s categorical imperative. The key idea is that no maxim concerning actions alone can be universally coherent, because in sufficiently extreme circumstances almost any action might be justified.

What can be universalized instead is the tuple of an action and its motivation set: the minimal collection of anticipated consequences that, if believed irrelevant, would cause the agent not to take the action.

Morality, on this account, concerns not only what one does, but why one believes those actions matter.

THE KNOT

The diagram we started from (also included below) is the shared skeleton of these papers.

The process loops because action feeds back into the environment that shapes perception, beginning the cycle again.